The tech industry’s unofficial motto for two decades was “move fast and break things”.

It was a philosophy that broke more than just taxi monopolies or hotel chains. It also built a digital world filled with risks for our most vulnerable.

In the 2024–25 financial year alone, the Australian Centre to Counter Child Exploitation received nearly 83,000 reports of online child sexual exploitation material (CSAM), primarily on mainstream platforms – a 41% increase from the year before.

Additionally, links have been found between adolescent use of social media and a range of harms, such as adverse mental health outcomes, substance abuse and risky sexual behaviours. These findings represent the failure of a digital ecosystem built on profit rather than protection.

With the federal government’s ban on social media accounts for under-16s taking effect this week, as well as age assurance for logged-in search engine users on December 27 and for adult content on March 9 2026, we have reached a landmark moment – but we must be clear about what this regulation achieves and what it ignores.

The ban may keep some children out (if they don’t circumvent it), but it does nothing to fix the harmful architecture awaiting them upon return. Nor does it take steps to modify the harmful behaviour of some adult users. We need meaningful change toward a digital duty of care, where platforms are legally required to anticipate and mitigate harm.

The need for safety by design

Currently, online safety often relies on a “whack-a-mole” approach: platforms wait for users to report harmful content, then moderators remove it. It’s reactive, slow, and often traumatising for the human moderators involved.

To truly fix this, we need safety by design. This principle demands that safety features be embedded in a platform’s core architecture. It moves beyond simply blocking access, to questioning why the platform allows harmful pathways to exist in the first place.

We’re already seeing this when platforms with histories of harm add new features – such as “trusted connections” on Roblox, which limits in-game connections to people the child also knows in the real world. This feature should have existed from the start.

At the CSAM Deterrence Centre, led by Jesuit Social Services in partnership with the University of Tasmania, our research challenges the industry narrative that safety is “too hard” or “too costly” to implement.

In fact, we have found that simple, well-designed interventions can disrupt harmful behaviours without breaking the user experience for everyone else.

Disrupting harm

One of our most significant findings comes from a partnership with one of the world’s largest adult sites, Pornhub. In the first publicly evaluated deterrence intervention, when a user searched for keywords associated with child abuse, they didn’t just hit a blank wall. They triggered a warning message and a chatbot directing the user to therapeutic help.

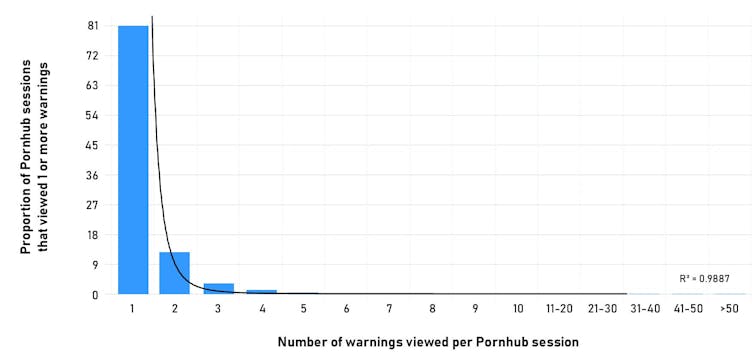

We observed a decrease in searches for illegal material. In addition, more than 80% of users who encountered this intervention did not attempt to search for that content on Pornhub again in that session.

Graph showing the number of users who searched for a term related to child sexual exploitation material after receiving a warning message. Author provided (no reuse)

This data, consistent with findings from three randomised control trials we have undertaken on Australian males aged 18–40, proves that warning messages work.

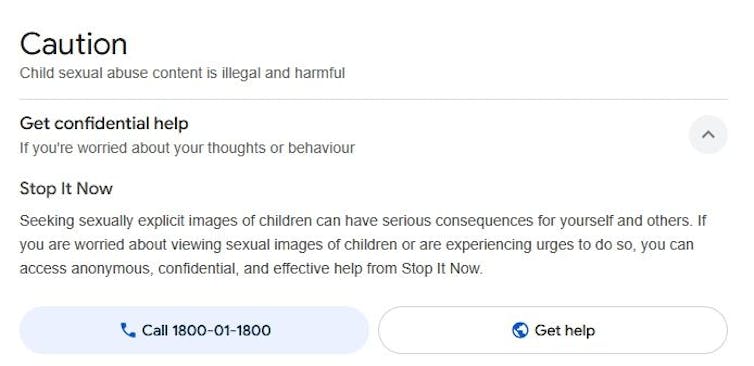

It is also consistent with another finding: Jesuit Social Services’ Stop It Now (Australia), which provides therapeutic services to those concerned about their feelings towards children, received a dramatic increase in web referrals after the warning message Google shows in search results for child abuse material was improved earlier this year.

The warning that Google displays in Australia directing users to Stop It Now if they search for illegal material relating to child sexual exploitation.

By interrupting the user’s flow with a clear deterrent message, we can stop a harmful thought from becoming a harmful action. This is safety by design, using a platform’s own interface to protect the community.

Holding platforms accountable

This is why it is so vital to include a digital duty of care in Australia’s online safety legislation, something the government committed to earlier this year.

Instead of users entering at their own risk, online platforms would be legally responsible for identifying and mitigating risks – such as algorithms that recommend harmful content or search functions that help users access illegal material.

Platforms can start making meaningful changes today by considering how their services might facilitate harm, and building in protections.

Examples include implementing grooming detection (enabling the automated detection of perpetrators trying to exploit children), blocking the sharing of known abuse imagery and videos and of links to websites that host such material, as well as proactively removing harm pathways that target the vulnerable – such as children online being able to interact with adults not known to them.

As our research shows, deterrence messaging plays a role too – displaying clear warnings when users search for harmful terms is highly effective. Tech companies should partner with researchers and non-profit organisations to test what works, sharing data rather than hiding it.

The “move fast and break things” era is over. We need a cultural shift where safety online is treated as an essential feature, not an optional add-on. The technology to make these platforms safer already exists. And evidence shows that safety by design can have an impact. The only thing missing is the will to implement it.

Joel Scanlan, Senior Lecturer in Cybersecurity and Privacy, University of Tasmania

This article is republished from The Conversation under a Creative Commons license. Read the original article.